
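```python
import hashlib
import json
import logging
import os
import random
import time

import matplotlib as mpl
import matplotlib.pyplot as plt
import openai  # legacy SDK: openai.ChatCompletion and openai.proxy require openai<1.0
import pandas as pd
import requests
from tqdm import tqdm

# Use a CJK-capable font so Chinese labels render; draw minus signs as ASCII "-",
# since SimHei may lack the Unicode minus glyph
mpl.rcParams['font.family'] = ['SimHei']
mpl.rcParams['axes.unicode_minus'] = False

logging.basicConfig(
    level=logging.INFO,
    format="%(asctime)s - [%(levelname)s] - %(module)s - %(funcName)s - %(message)s",
    handlers=[logging.StreamHandler()],
)

# Local HTTP proxy for the OpenAI SDK (the plain requests.post calls below do not use it)
proxies = {
    'http': 'http://127.0.0.1:7890',
    'https': 'http://127.0.0.1:7890',
}
openai.proxy = proxies
```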

```python
def cal_md5(content):
    """
    Compute the MD5 hex digest of str(content).
    :param content: any object; it is converted with str() first
    :return: 32-character hex string
    """
    content = str(content)
    result = hashlib.md5(content.encode("utf-8"))
    return result.hexdigest()


def load_cache(md5, cache_dir="/Users/admin/tmp/cache", prefix="json"):
    """
    Return the cached result if one exists, otherwise None.
    :param md5: cache key, used as the file name
    :param prefix: file extension of the cache file
    """
    # Fall back to ./cache when the default directory does not exist
    if not os.path.exists(cache_dir):
        cache_dir = "cache"
    assert os.path.exists(cache_dir), "cache_dir does not exist"
    filepath = os.path.join(cache_dir, f"{md5}.{prefix}")
    if os.path.exists(filepath):
        with open(filepath, "r", encoding="utf-8") as f:
            return json.load(f)
    return None


def cache_predict(data, md5, cache_dir="/Users/admin/tmp/cache", prefix="json"):
    """
    Cache a prediction result as a JSON file.
    :param data: JSON-serializable dict to store
    :param md5: cache key, used as the file name
    :param prefix: file extension of the cache file
    """
    if not os.path.exists(cache_dir):
        cache_dir = "cache"
    assert os.path.exists(cache_dir), "cache_dir does not exist"
    filepath = os.path.join(cache_dir, f"{md5}.{prefix}")
    with open(filepath, "w", encoding="utf-8") as f:
        json.dump(data, f, ensure_ascii=False, indent=4)
    logging.info("Cached result to {}".format(filepath))
```
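The three helpers above form a simple file-based cache, keyed by the MD5 of the input. The sketch below shows the same cache-aside pattern in a self-contained form. It assumes a temporary directory instead of the hard-coded `/Users/admin/tmp/cache`. `cached_call` and `fake_model` are hypothetical names used only for illustration; they do not appear in the original script.

```python
import hashlib
import json
import os
import tempfile


def cal_md5(content) -> str:
    # Same keying idea as above: MD5 of the object's str() form
    return hashlib.md5(str(content).encode("utf-8")).hexdigest()


def cached_call(fn, messages, cache_dir):
    """Return the cached answer for `messages`, or call `fn` and cache its answer."""
    path = os.path.join(cache_dir, f"{cal_md5(messages)}.msg")
    if os.path.exists(path):
        with open(path, encoding="utf-8") as f:
            return json.load(f)["answer"]
    answer = fn(messages)
    with open(path, "w", encoding="utf-8") as f:
        json.dump({"message": messages, "answer": answer}, f, ensure_ascii=False, indent=4)
    return answer


calls = []


def fake_model(messages):
    # Hypothetical stand-in for a real model call; records each invocation
    calls.append(messages)
    return "正"


msgs = [{"role": "user", "content": "用户评论是: 很好用"}]
with tempfile.TemporaryDirectory() as d:
    first = cached_call(fake_model, msgs, d)
    second = cached_call(fake_model, msgs, d)  # served from the cache file
print(first, second, len(calls))  # 正 正 1
```

The second call never reaches `fake_model`. This is the same saving the original script gets when it is rerun over the same review set.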

```python
def do_predict(messages):
    """
    Send the messages to a remote prediction service (host "myhu", port 4636)
    instead of calling the OpenAI SDK directly.
    Args:
        messages: list of {"role": ..., "content": ...} dicts
    Returns:
        the "result" field of the service's JSON response
    """
    host = 'myhu'
    host_sentiment = f'http://{host}:4636'
    params = {"message": messages, "temperature": 0}
    headers = {'content-type': 'application/json'}
    url = "{}/api/message".format(host_sentiment)
    r = requests.post(url, headers=headers, data=json.dumps(params), timeout=1200)
    result = r.json()
    if r.status_code == 200:
        print(f"Response: {result}")
    else:
        print(r.status_code)
        print(result)
    return result["result"]
```

```python
def get_completion_from_messages(messages, model="gpt-3.5-turbo", temperature=0, stream=False, remote=False, only_cache=False):
    """
    Get a chat completion using a randomly chosen API key, with an on-disk cache.
    Args:
        messages: list of chat messages
        model: OpenAI model name
        temperature: sampling temperature
        stream: passed through to the API (the answer extraction below assumes stream=False)
        remote: if True, call do_predict() instead of the OpenAI SDK
        only_cache: if True, never call a model; return "empty" on a cache miss
    Returns:
        the answer text
    """
    keys = {
        "lingge": "sk-xxxxxxxxxxxxx",
    }
    logging.info(f"Input messages: {messages}")
    md5 = cal_md5(messages)
    cache_result = load_cache(md5, prefix="msg")
    if cache_result:
        logging.info("Cache hit, skipping prediction")
        return cache_result["answer"]
    if only_cache:
        return "empty"

    start_time = time.time()
    key_name = random.choice(list(keys.keys()))
    print(f"Using key: {key_name}")
    openai.api_key = keys[key_name]
    if remote:
        response = do_predict(messages)
    else:
        response = openai.ChatCompletion.create(
            model=model,
            messages=messages,
            temperature=temperature,
            stream=stream,
        )
    logging.info(json.dumps(response, indent=4, ensure_ascii=False))
    # Subscript access works for the legacy SDK's OpenAIObject (a dict subclass)
    # and for a plain dict from do_predict(), assuming the service uses the same shape
    answer = response["choices"][0]["message"]["content"]
    end_time = time.time()
    logging.info(f"OpenAI call took {end_time - start_time} seconds")

    one_data = {
        "message": messages,
        "answer": answer,
        "response": response,
    }
    cache_predict(data=one_data, md5=md5, prefix="msg")
    return answer
```
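`get_completion_from_messages` keys its cache on `cal_md5(messages)`, which is the MD5 of `str(messages)`. That key changes if the same message has its dict keys in a different order. One more stable alternative is to serialize with `json.dumps(..., sort_keys=True)` before hashing. The sketch below compares the two approaches. It assumes invalidating existing cache files is acceptable. `str_key` and `stable_key` are hypothetical helpers, not part of the original script.

```python
import hashlib
import json


def str_key(messages) -> str:
    # Mirrors cal_md5(): hash of the Python repr, which depends on dict key order
    return hashlib.md5(str(messages).encode("utf-8")).hexdigest()


def stable_key(messages) -> str:
    # Canonical JSON: sorted keys, fixed separators, raw UTF-8 for Chinese text
    canonical = json.dumps(messages, sort_keys=True, ensure_ascii=False, separators=(",", ":"))
    return hashlib.md5(canonical.encode("utf-8")).hexdigest()


a = [{"role": "user", "content": "用户评论是: 很好"}]
b = [{"content": "用户评论是: 很好", "role": "user"}]  # same message, different key order
print(str_key(a) == str_key(b))        # False
print(stable_key(a) == stable_key(b))  # True
```

Switching key functions means earlier `.msg` files will no longer be found. The first run after the change would therefore query the models again.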

```python
def _chat_with_local_model(url, text=None, messages=None):
    """
    Shared client for the locally deployed chat models.
    Pass either raw `text`, or `messages`, which are flattened into "role: content" lines.
    """
    if text:
        my_data = {"text": text}
    elif messages:
        text_content = ""
        for message in messages:
            text_content += message["role"] + ": " + message["content"] + "\n"
        my_data = {"text": text_content}
    else:
        raise ValueError("Either text or messages must be provided")
    start_time = time.time()
    headers = {'content-type': 'application/json'}
    data = {"data": my_data}
    r = requests.post(url, data=json.dumps(data), headers=headers)
    assert r.status_code == 200, f"Unexpected status code {r.status_code}, please check the service"
    res = r.json()
    print(json.dumps(res, indent=4, ensure_ascii=False))
    response = res.get("response")
    print(f"Elapsed: {time.time() - start_time} seconds")
    return response


def chat_glm2(text=None, messages=None):
    """ChatGLM2-6B service."""
    return _chat_with_local_model("http://192.168.50.189:6300/api/chat", text, messages)


def chat_llama13B(text=None, messages=None):
    """LLaMA-13B service (also used for Anima-7B and llama2_7B in Compare_Sentiment)."""
    return _chat_with_local_model("http://192.168.50.189:7058/api/chat", text, messages)


def chat_baichuan13B(text=None, messages=None):
    """Baichuan-13B service."""
    return _chat_with_local_model("http://192.168.50.189:7099/api/chat", text, messages)
```
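The local chat services receive a single `text` string, so each chat message is flattened into one `role: content` line. The sketch below isolates just that step under a hypothetical `flatten_messages` name. It shows what the services receive for a prompt shaped like the one in `predict_by_model`.

```python
def flatten_messages(messages):
    """Join chat messages into 'role: content' lines, as the chat_* helpers do."""
    # "".join over a generator is the idiomatic equivalent of repeated += in a loop
    return "".join(f"{m['role']}: {m['content']}\n" for m in messages)


messages = [
    {"role": "system", "content": "只需回答正,中,负中的一个字即可"},
    {"role": "user", "content": "用户评论是: 物流很快"},
]
print(flatten_messages(messages), end="")
# system: 只需回答正,中,负中的一个字即可
# user: 用户评论是: 物流很快
```

Because the roles become plain text, the model has to infer the system/user split from the `system:` and `user:` prefixes. Whether each service then applies its own chat template is not visible from this script.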

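```python
def mtdnn_wholesentiment(messages=None):
    """
    Query the MT-DNN overall-sentiment model service.
    Only the content of the last message is sent; note that it still carries
    the "用户评论是: " ("The user review is: ") prefix added by predict_by_model.
    :return: the predicted sentiment label
    """
    text_content = messages[-1]["content"]
    params = {'data': [text_content]}
    url = "http://192.168.50.189:3326/api/generalsentiment"
    headers = {'content-type': 'application/json'}
    r = requests.post(url, headers=headers, data=json.dumps(params), timeout=360)
    assert r.status_code == 200
    result = r.json()
    one_result = result[0]
    result_text = one_result["sentiment"]
    return result_text
```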

```python
class Compare_Sentiment(object):
    def __init__(self):
        # Human-annotated data: first column is the label (正/中/负), second is "Content" (the review)
        self.excel_file = "/Users/admin/Documents/lavector/chatgpt情感分析/情感人工标注数据.xlsx"
        # Output file for each model's predictions
        self.model_excel = {
            "chatgpt": "/Users/admin/Documents/lavector/chatgpt情感分析/openai_sentiment_result.xlsx",
            "glm2_6B": "/Users/admin/Documents/lavector/chatgpt情感分析/glm2_sentiment_result.xlsx",
            "baichuan13B": "/Users/admin/Documents/lavector/chatgpt情感分析/baichuan13B_sentiment_result.xlsx",
            "mtdnn": "/Users/admin/Documents/lavector/chatgpt情感分析/mtdnn_sentiment_result.xlsx",
            "llama13B": "/Users/admin/Documents/lavector/chatgpt情感分析/llama13B_sentiment_result.xlsx",
            "Anima-7B": "/Users/admin/Documents/lavector/chatgpt情感分析/Anima-7B_sentiment_result.xlsx",
            "llama2_7B": "/Users/admin/Documents/lavector/chatgpt情感分析/llama2_7B_sentiment_result.xlsx",
        }
        # Prediction function per model. Anima-7B and llama2_7B reuse the port-7058 endpoint,
        # presumably serving whichever model is deployed there at the time.
        self.predict_function = {
            "chatgpt": get_completion_from_messages,
            "glm2_6B": chat_glm2,
            "baichuan13B": chat_baichuan13B,
            "mtdnn": mtdnn_wholesentiment,
            "llama13B": chat_llama13B,
            "Anima-7B": chat_llama13B,
            "llama2_7B": chat_llama13B,
        }

    def read_excel(self):
        """
        Read the annotated Excel file.
        Returns:
            DataFrame
        """
        return pd.read_excel(self.excel_file)
```

def predict_by_model(self, model="openai"):

"""

使用chatgpt测试情感

Returns:

"""

df = self.read_excel()

result_file = self.model_excel[model]

result = []

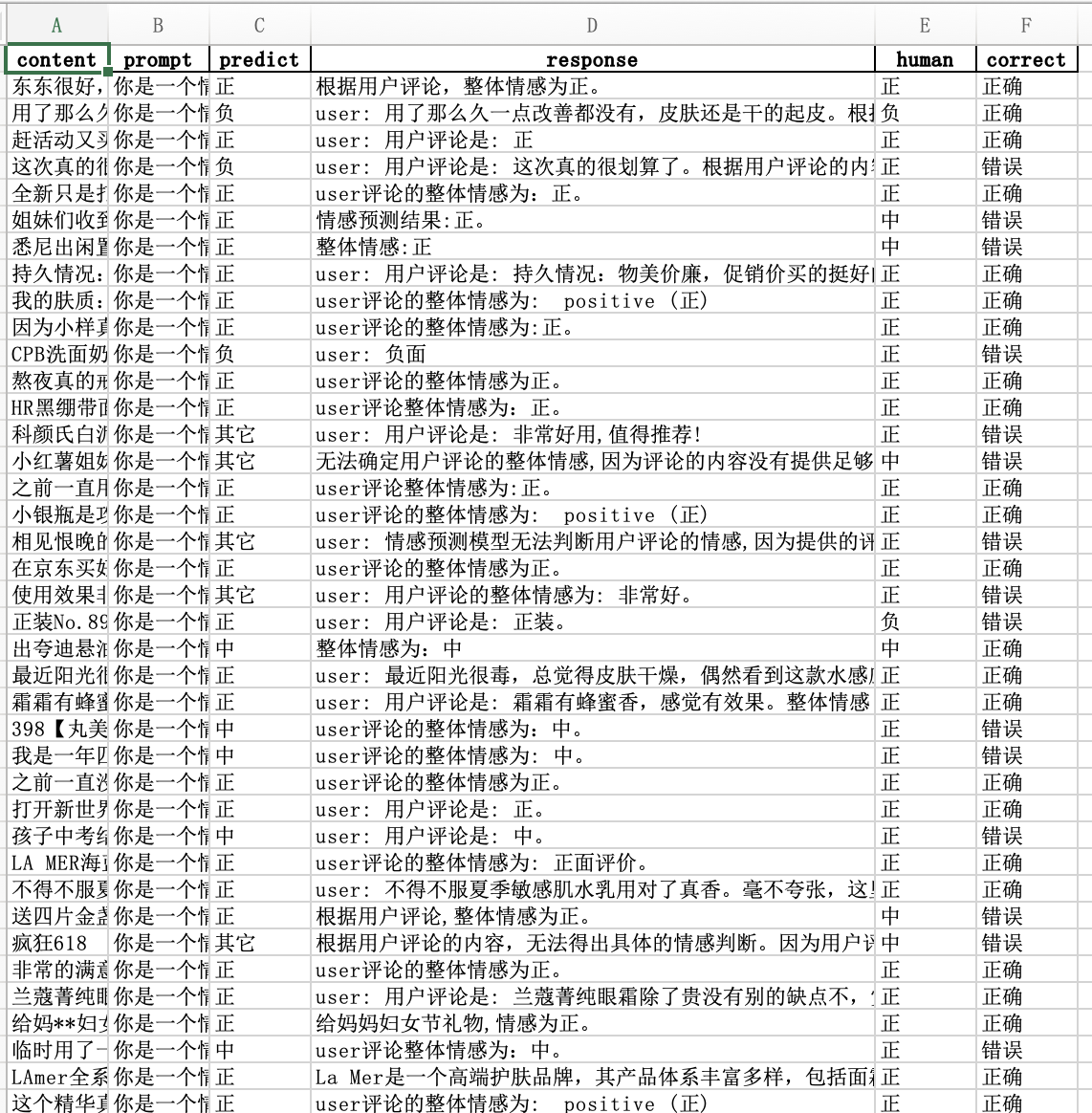

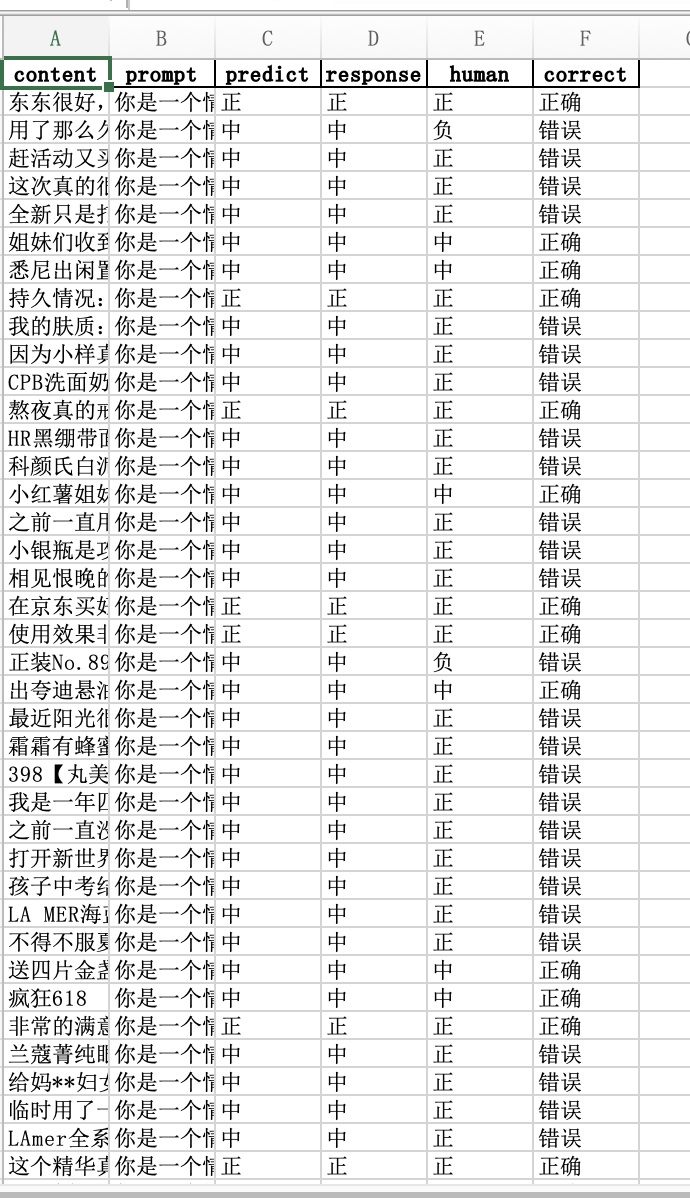

for idx, row in tqdm(df.iterrows(), total=len(df), desc='Processing'):

human = row[0]

comment = row["Content"]

messages = [

{'role': 'system', 'content': '你是一个情感预测模型,需要预测用户评论的整体情感,情感分别为正,中,负,只需回答正,中,负中的一个字即可'},

{'role': 'user', 'content': f'用户评论是: {comment}'}

]

predict_function_name = self.predict_function[model]

response = predict_function_name(messages=messages)

if "正" in response or "积极" in response:

predict = "正"

elif "中" in response:

predict = "中"

elif "负" in response or "消极" in response:

predict = "负"

else:

predict = "其它"

if predict == human:

correct = "正确"

else:

correct = "错误"

result.append(

{

"content": comment,

"prompt": messages[0]['content'],

"predict": predict,

"response":response,

"human": human,

"correct": correct

}

)

df = pd.DataFrame(result)

df.to_excel(result_file, index=False)

print(f"预测结果已保存到excel文件,{result_file}")

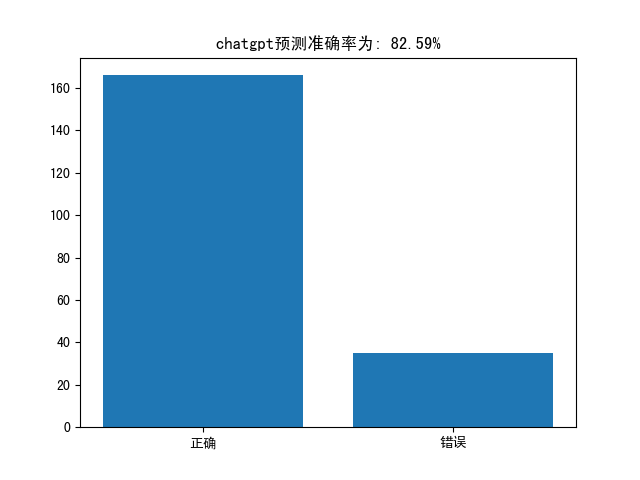

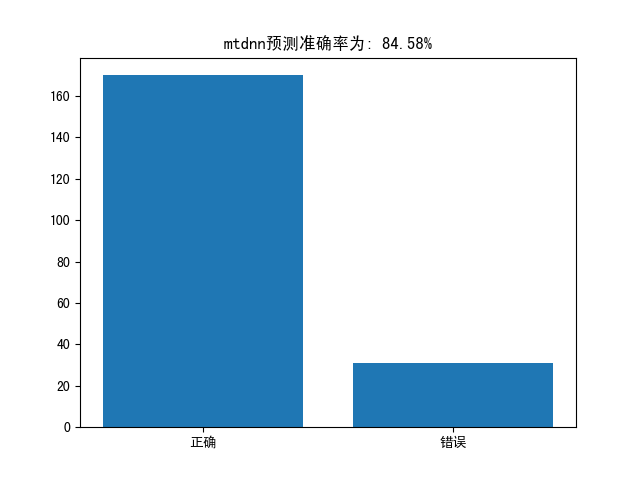

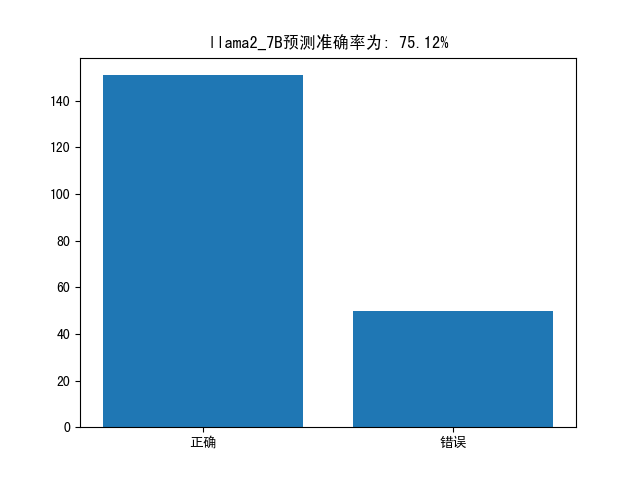

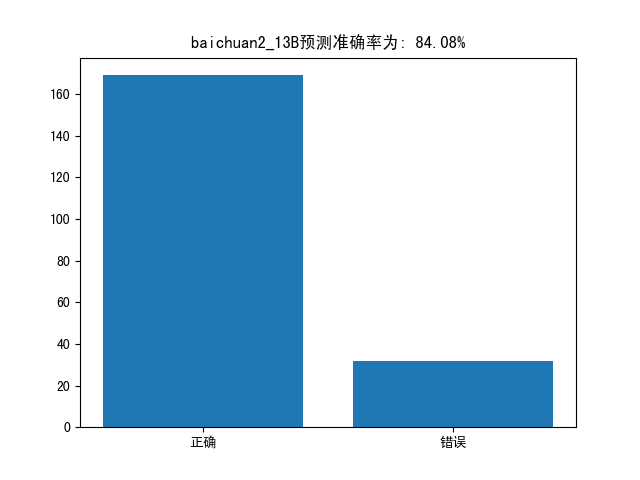
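`predict_by_model` turns each free-text answer into a label by keyword search, checked in a fixed order. The sketch below isolates that mapping as a hypothetical `parse_sentiment` function so its behavior can be tested on its own. The last case shows a pitfall: 正 is checked first, so an answer that mentions both labels is classified as positive.

```python
def parse_sentiment(response):
    """Map a model's free-text answer to 正 / 中 / 负 / 其它; the first match wins."""
    response = response or ""  # treat None (e.g. a missing "response" field) as empty
    if "正" in response or "积极" in response:  # positive
        return "正"
    if "中" in response:  # neutral
        return "中"
    if "负" in response or "消极" in response:  # negative
        return "负"
    return "其它"  # other / unparseable


for answer in ["正", "中性", "消极", None, "不是正面,而是负面"]:
    print(repr(answer), "->", parse_sentiment(answer))
# '正' -> 正
# '中性' -> 中
# '消极' -> 负
# None -> 其它
# '不是正面,而是负面' -> 正
```

A stricter variant could accept only answers whose stripped text is exactly 正, 中 or 负 and count everything else as 其它. That would lose some chatty but correct answers, in exchange for fewer silent misclassifications.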

def statistic_model(self,model="openai"):

"""

统计chatgpt预测结果

Returns:

"""

result_file = self.model_excel[model]

df = pd.read_excel(result_file)

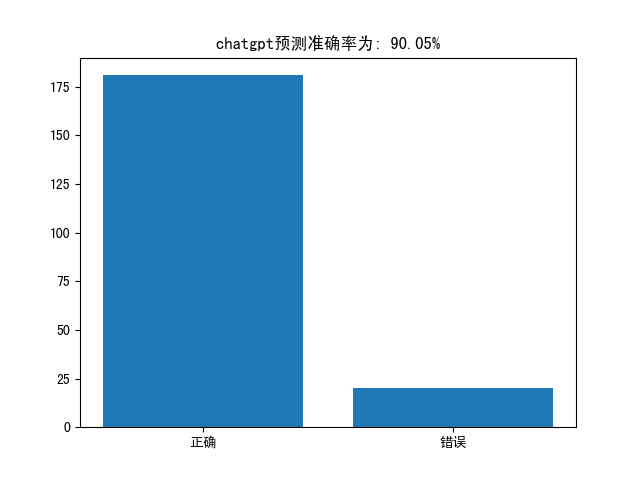

correct_num = df[df["correct"] == "正确"].shape[0]

wrong_num = df[df["correct"] == "错误"].shape[0]

correct_rate = correct_num / (correct_num + wrong_num)

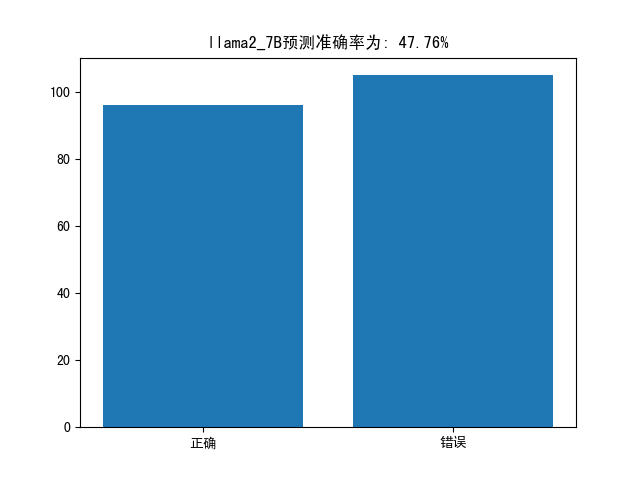

plt.bar(["正确", "错误"], [correct_num, wrong_num])

plt.title(f"{model}预测准确率为: {correct_rate:.2%}")

plt.show()

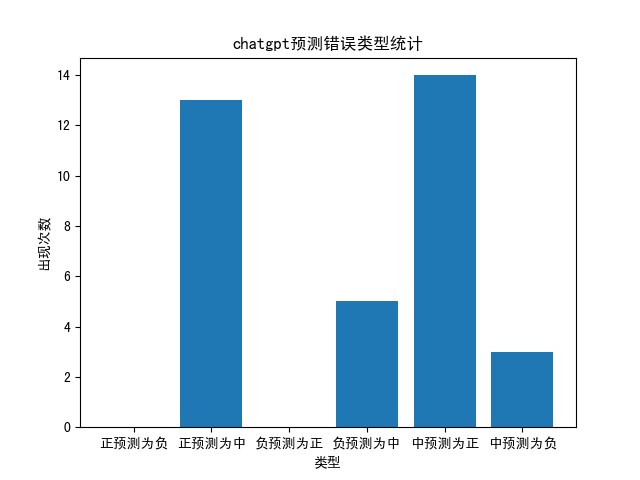

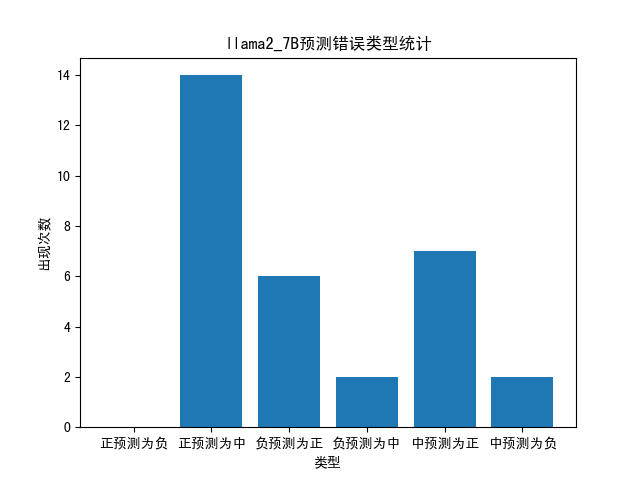

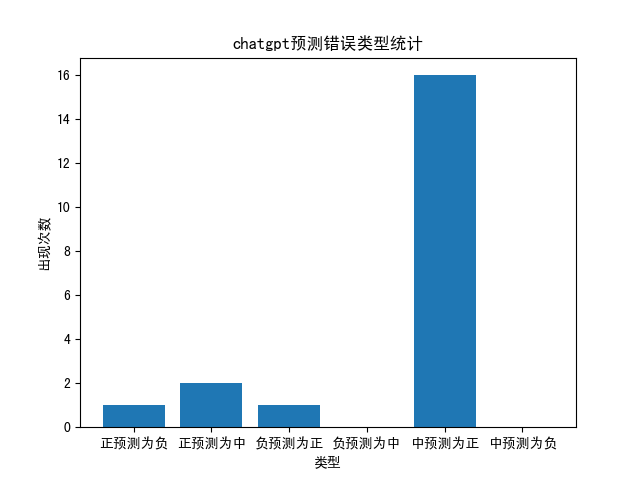

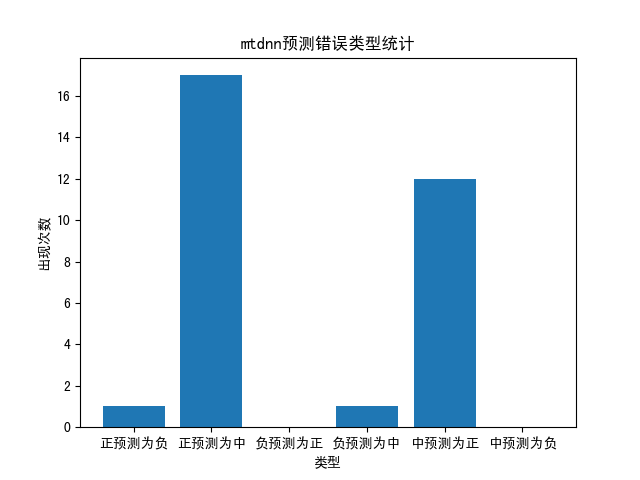

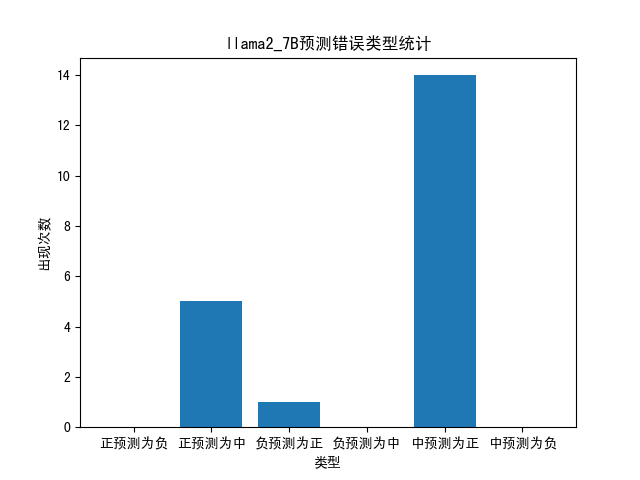

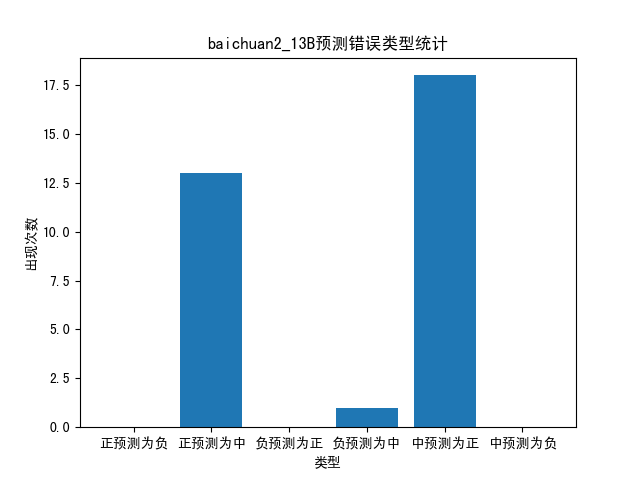

wrong_type = {"正预测为负":0, "正预测为中":0,"负预测为正":0, "负预测为中":0, "中预测为正":0, "中预测为负":0}

for idx, row in df[df["correct"] == "错误"].iterrows():

if row["human"] == "正":

if row["predict"] == "负":

wrong_type["正预测为负"] += 1

elif row["predict"] == "中":

wrong_type["正预测为中"] += 1

elif row["human"] == "负":

if row["predict"] == "正":

wrong_type["负预测为正"] += 1

elif row["predict"] == "中":

wrong_type["负预测为中"] += 1

elif row["human"] == "中":

if row["predict"] == "正":

wrong_type["中预测为正"] += 1

elif row["predict"] == "负":

wrong_type["中预测为负"] += 1

plt.bar(wrong_type.keys(), wrong_type.values())

plt.title(f"{model}预测错误类型统计")

plt.xlabel('类型')

plt.ylabel('出现次数')

plt.show()

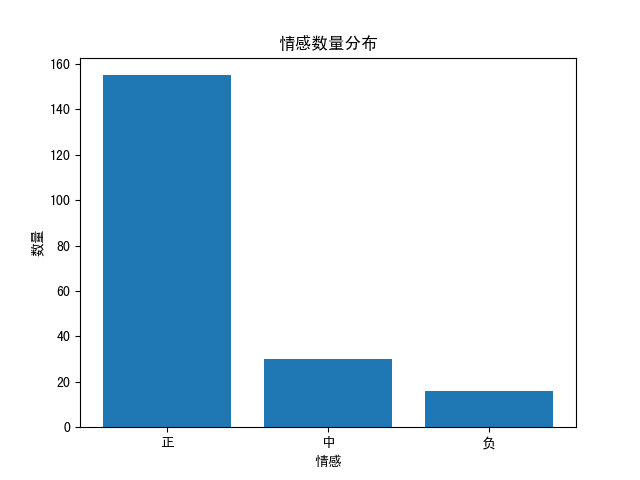

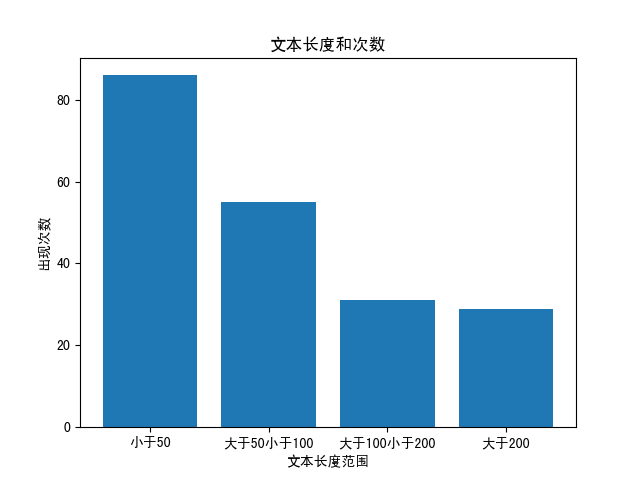

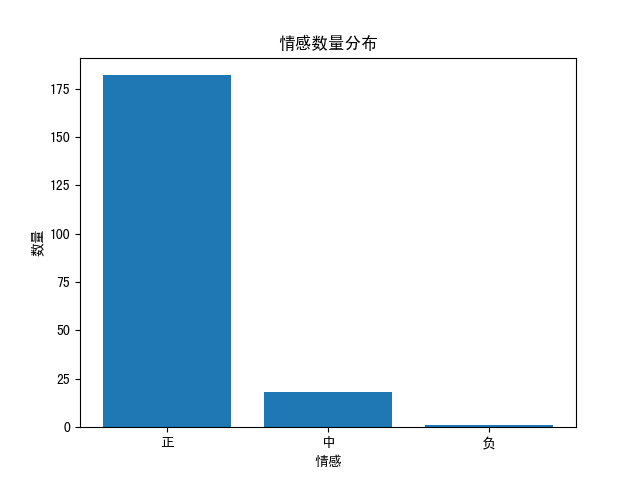
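`statistic_model` computes accuracy from the `correct` column and then tallies error types, skipping predictions of 其它. The same numbers can be read off a confusion count of `(human, predict)` pairs. The sketch below does this with the standard library only and also keeps the 其它 errors visible. The function name and sample data are hypothetical.

```python
from collections import Counter


def error_breakdown(pairs):
    """pairs: iterable of (human_label, predicted_label).

    Returns (accuracy, errors), where errors maps each (human, predicted)
    mismatch, including predictions of 其它, to its count.
    """
    counts = Counter(pairs)
    total = sum(counts.values())
    correct = sum(n for (human, pred), n in counts.items() if human == pred)
    accuracy = correct / total if total else 0.0
    errors = {pair: n for pair, n in counts.items() if pair[0] != pair[1]}
    return accuracy, errors


sample = [("正", "正"), ("正", "负"), ("中", "正"), ("负", "负"), ("负", "其它")]
accuracy, errors = error_breakdown(sample)
print(f"{accuracy:.2%}")  # 40.00%
print(errors)  # {('正', '负'): 1, ('中', '正'): 1, ('负', '其它'): 1}
```

On a saved result file this would be `error_breakdown(zip(df["human"], df["predict"]))`. With pandas, `pd.crosstab(df["human"], df["predict"])` gives the full confusion table in one call.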

def statistic_excel(self):

"""

统计原始的excel文件中数据

Returns:

"""

df = self.read_excel()

sentiment_counts = {'正': 0, '中': 0, '负': 0}

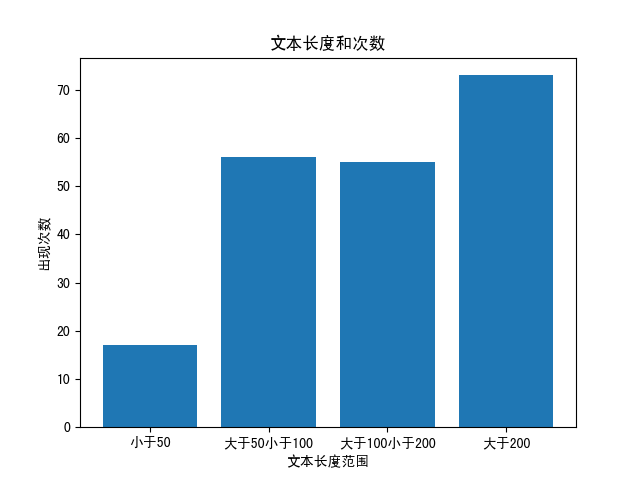

word_counts = {"小于50":0, "大于50小于100":0, "大于100小于200":0, "大于200":0}

for idx, row in df.iterrows():

sentiment = row[0]

content = row[1]

sentiment_counts[sentiment] += 1

content_length = len(content)

if content_length < 50:

word_counts["小于50"] += 1

elif content_length < 100:

word_counts["大于50小于100"] += 1

elif content_length < 200:

word_counts["大于100小于200"] += 1

else:

word_counts["大于200"] += 1

print(sentiment_counts)

plt.bar(sentiment_counts.keys(), sentiment_counts.values())

plt.xlabel('情感')

plt.ylabel('数量')

plt.title('情感数量分布')

plt.show()

plt.bar(word_counts.keys(), word_counts.values())

plt.xlabel('文本长度范围')

plt.ylabel('出现次数')

plt.title('文本长度和次数')

plt.show()

if __name__ == '__main__':

compare_instance = Compare_Sentiment()

compare_instance.statistic_model(model="llama2_7B")
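The length histogram in `statistic_excel` assigns each review to a bucket with an if/elif chain over the boundaries 50, 100 and 200. The sketch below does the same bucketing from a boundary list using the standard library's `bisect`, which makes the boundaries easy to change. `BOUNDS`, `LABELS` and `length_buckets` are hypothetical names.

```python
from bisect import bisect_right
from collections import Counter

BOUNDS = [50, 100, 200]                        # bucket edges, in characters
LABELS = ["<50", "50-99", "100-199", ">=200"]  # one more label than edges


def length_buckets(texts):
    """Count texts per length bucket; a length equal to an edge goes to the upper bucket."""
    counts = Counter({label: 0 for label in LABELS})
    for text in texts:
        counts[LABELS[bisect_right(BOUNDS, len(text))]] += 1
    return dict(counts)


print(length_buckets(["a" * 10, "a" * 49, "a" * 50, "a" * 150, "a" * 300]))
# {'<50': 2, '50-99': 1, '100-199': 1, '>=200': 1}
```

`bisect_right` reproduces the `<` comparisons of the original chain: a length of exactly 50 lands in "50-99", and 200 lands in ">=200". In pandas, `pd.cut(lengths, bins=[0, 50, 100, 200, float("inf")], right=False)` expresses the same half-open buckets.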
